In the era of containers (the “Docker Age”), Java is still alive, whether it is struggling to stay that way or not. Java has always been (in)famous for its performance, mostly because of the abstraction layers between the code and the real machine. That is the cost of being multi-platform (write once, run anywhere, remember?), with a JVM in between (the JVM being a software machine that simulates what a real machine does). Nowadays, with the Microservice Architecture, it may no longer make sense, or bring any advantage, to build something multi-platform (interpreted) for something that will always run in the same place and on the same platform (the Docker container, a Linux environment). So now, perhaps more than ever, portability matters less, and that extra level of abstraction is not important.

Having said that, let’s perform a simple, raw comparison between two alternatives for building Microservices in Java: the very well-known Spring Boot and the not-so-well-known (yet) Quarkus.

Opponents

Who is Quarkus?

An open-source set of technologies adapted to GraalVM and HotSpot for writing Java applications. It offers (promises) a super-fast startup time and a lower memory footprint, which makes it ideal for containers and serverless workloads. It uses Eclipse MicroProfile (JAX-RS, CDI, JSON-P), which builds on a subset of Java EE, to build Microservices.

GraalVM is a universal, polyglot virtual machine (JavaScript, Python, Ruby, R, Java, Scala, Kotlin). GraalVM (specifically Substrate VM) makes ahead-of-time (AOT) compilation possible, converting bytecode into native machine code and producing a binary that can be executed natively.

Bear in mind that not every feature is available in native execution; AOT compilation has its limitations. Pay attention to this sentence (quoting the GraalVM team):

“We run an aggressive static analysis that requires a closed-world assumption, which means that all classes and all bytecodes that are reachable at runtime must be known at build time”.

For instance, Reflection and the Java Native Interface (JNI) won’t work out of the box (they require some extra work). You can find a list of restrictions in the Native Image Java Limitations document.
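To make the closed-world assumption concrete, here is a small, hypothetical Java example (the ReflectionSketch class and its method are ours, not part of the article’s project). The class to instantiate is chosen by a string that is only known at runtime, so a build-time static analysis cannot tell which class must be kept in the binary. On a regular JVM this runs with no extra setup; in a GraalVM native image, a class looked up by a runtime-computed name like this generally has to be declared in the reflection configuration at build time, otherwise the lookup fails.

```java
import java.lang.reflect.Constructor;

// Hypothetical example: the class name comes from outside the code (args, env, config),
// so it is only known at runtime. A closed-world, build-time analysis cannot see which
// class will be needed, which is why native images need extra reflection configuration.
final class ReflectionSketch {

    // Instantiates the named class through its accessible no-arg constructor
    // and returns the instance's toString().
    static String instantiateAndDescribe(String className) {
        try {
            Class<?> clazz = Class.forName(className);
            Constructor<?> ctor = clazz.getDeclaredConstructor();
            Object instance = ctor.newInstance();
            return instance.toString();
        } catch (ReflectiveOperationException e) {
            throw new IllegalArgumentException("Cannot instantiate " + className, e);
        }
    }

    public static void main(String[] args) {
        // The class name is supplied at runtime; defaults to java.util.ArrayList.
        String className = args.length > 0 ? args[0] : "java.util.ArrayList";
        System.out.println(instantiateAndDescribe(className));
    }
}
```

Run without arguments on a JVM, it prints [] (the toString() of an empty ArrayList).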

Who is Spring Boot?

Really? Well, just to say something (feel free to skip it), in one sentence: built on top of the Spring Framework, Spring Boot is an open-source framework that offers a much simpler way to build, configure and run Java web-based applications, which makes it a good candidate for microservices.

Battle Preparations – Creating the Docker Images

Quarkus Image

Let’s create the Quarkus application and later wrap it in a Docker Image. Basically, we will do the same thing as the Quarkus Getting Started tutorial.

Creating the project with the Quarkus Maven plugin:

mvn io.quarkus:quarkus-maven-plugin:1.0.0.CR2:create \
    -DprojectGroupId=ujr.combat.quarkus \
    -DprojectArtifactId=quarkus-echo \
    -DclassName="ujr.combat.quarkus.EchoResource" \
    -Dpath="/echo"

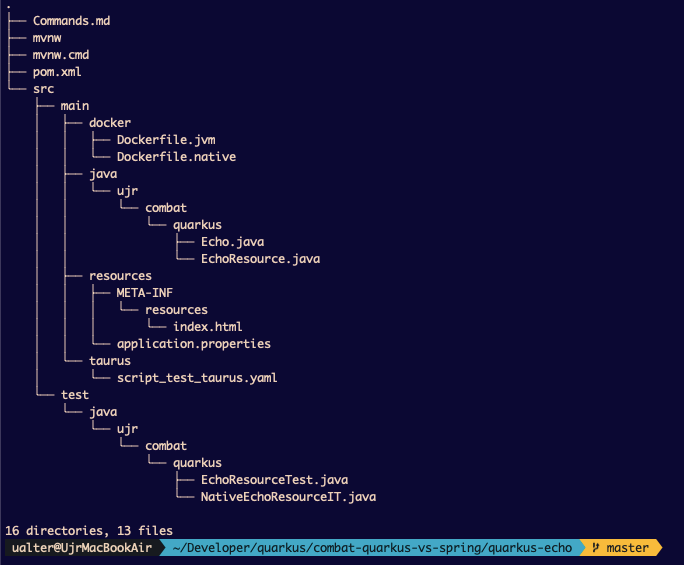

This will result in the structure of our project, like this:

Notice that two sample Dockerfiles were also created (in src/main/docker): one for an ordinary JVM App Image and another for a Native App Image.

In the generated code, we have to change just one thing: add the dependency below, because we want to generate JSON content.

<dependency>
    <groupId>io.quarkus</groupId>
    <artifactId>quarkus-resteasy-jsonb</artifactId>
</dependency>

Quarkus implements the JAX-RS specification through the RESTEasy project.

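Our “entire” application is the EchoResource class (the full code is in the source repository linked at the end of this article). To run it in development mode: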

mvn clean compile quarkus:dev

This mode also enables hot deployment with background compilation. Let’s run a simple test to see it working:

curl -sw "\n\n" http://localhost:8080/echo/ualter | jq .

Now that we’ve seen it working, let’s create the Docker Image. First, download GraalVM from here: https://github.com/graalvm/graalvm-ce-builds/releases

Important! Do not download the latest version, 19.3.0: Quarkus 1.0 is not compatible with it (perhaps Quarkus 1.1 will be). Right now, the version that should work is GraalVM 19.2.1, so get that one.

Set the GRAALVM_HOME environment variable to point to its home directory:

## On macOS it will be something like:
export GRAALVM_HOME=/Users/ualter/Developer/quarkus/graalvm-ce-java8-19.2.1/Contents/Home/

Then install the Native Image component into your GraalVM installation:

$GRAALVM_HOME/bin/gu install native-image

Let’s generate the native version for the current platform (in this case, a native executable for macOS):

mvn package -Pnative

If everything works, we will find a file named quarkus-echo-1.0-SNAPSHOT-runner inside the ./target folder. This is your app’s executable binary, and you can start it simply by running ./target/quarkus-echo-1.0-SNAPSHOT-runner. There is no need for the JVM (the ordinary java -jar target/quarkus-echo-1.0-SNAPSHOT-runner.jar): it is a native executable binary.

Now let’s build the native executable for our Docker Image, that is, a Linux native executable to run inside the container. By default, the native executable is built for the current platform (macOS). Since that is not the platform the container will run (Linux), we instruct the Maven build to generate the executable from inside a container:

mvn package -Pnative -Dquarkus.native.container-build=true

For this step, make sure you have a working Docker container runtime.

The resulting file is a 64-bit Linux executable, so naturally it won’t run on macOS: it was built for our Docker container image. Moving on, let’s generate the Docker image:

docker build -t ujr/quarkus-echo -f src/main/docker/Dockerfile.native .
## Testing it...
docker run -i --name quarkus-echo --rm -p 8081:8081 ujr/quarkus-echo

The final Docker image was 115MB, but you can get a tiny Docker image by using a distroless image version. Distroless images contain only your application and its runtime dependencies; everything else (package managers, shells, or the ordinary programs commonly found in a standard Linux distribution) is removed. A distroless image of our application has a size of 42.3MB. The file ./src/main/docker/Dockerfile.native-distroless has the recipe to produce it.
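For a sense of scale, the relative size reduction from the two figures above works out as follows:

```latex
1 - \frac{42.3\,\text{MB}}{115\,\text{MB}} \approx 1 - 0.368 = 0.632
```

So the distroless image is roughly 63% smaller, or about 2.7 times smaller than the 115MB image.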

About Distroless Images: “Restricting what’s in your runtime container to precisely what’s necessary for your app is a best practice employed by Google and other tech giants that have used containers in production for many years.”

Spring Boot Image

By now, probably everyone knows how to produce an ordinary Spring Boot Docker image, so let’s skip the details, right? Just one important observation: the code is exactly the same. Or rather, almost the same, because we are using Spring Framework annotations, of course. That’s the only difference. You can check every detail in the provided source code (link below).

mvn install dockerfile:build
## Testing it...
docker run --name springboot-echo --rm -p 8082:8082 ujr/springboot-echo

The Battle

Let’s launch both containers, get them up and running a couple of times, and compare the Startup Time and the Memory Footprint.

In this process, each container was created and destroyed 10 times, and its startup time and memory footprint were then analyzed. The numbers shown below are the averages across all those runs.
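The article does not say exactly how the startup times were captured. As one possible approach, here is a minimal Java sketch under our own assumptions (the StartupTimer class and its methods are hypothetical, not the harness behind these numbers): start the container, poll a readiness check until it succeeds, record the elapsed time, and average the runs. In a real measurement, start could launch docker run through ProcessBuilder and isReady could send an HTTP request to the echo endpoint; both are left as parameters here.

```java
import java.util.Arrays;
import java.util.function.BooleanSupplier;

// Hypothetical measurement helper; not the harness used for the numbers in this article.
final class StartupTimer {

    // Runs `start`, then polls `isReady` every `pollMillis` ms until it returns true.
    // Returns the elapsed wall-clock time in ms, or throws if `timeoutMillis` is exceeded.
    static long timeUntilReadyMillis(Runnable start, BooleanSupplier isReady,
                                     long pollMillis, long timeoutMillis) {
        long t0 = System.nanoTime();
        long deadline = t0 + timeoutMillis * 1_000_000L;
        start.run();
        while (!isReady.getAsBoolean()) {
            if (System.nanoTime() > deadline) {
                throw new IllegalStateException("Not ready within " + timeoutMillis + " ms");
            }
            try {
                Thread.sleep(pollMillis);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("Interrupted while waiting", e);
            }
        }
        return (System.nanoTime() - t0) / 1_000_000L;
    }

    // Arithmetic mean of the collected samples (e.g. 10 runs per container).
    static double mean(long[] samplesMillis) {
        if (samplesMillis.length == 0) {
            throw new IllegalArgumentException("No samples");
        }
        return Arrays.stream(samplesMillis).average().getAsDouble();
    }

    public static void main(String[] args) {
        // Simulated runs: "ready" after three polls, standing in for a real container.
        int[] polls = {0};
        long[] samples = new long[10];
        for (int i = 0; i < samples.length; i++) {
            polls[0] = 0;
            samples[i] = timeUntilReadyMillis(() -> { }, () -> ++polls[0] > 3, 5, 5_000);
        }
        System.out.println("Average startup (simulated): " + mean(samples) + " ms");
    }
}
```

Polling from the outside measures the time until the service actually answers, which is closer to what matters for scaling than a framework's own "started in" log line, at the cost of some polling granularity.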

Startup Time

Obviously, this aspect can play an important role in Scalability and Serverless Architecture.

In a Serverless architecture, an ephemeral container is normally triggered by an event to perform a task/function. In Cloud environments, the price is usually based on the number of executions rather than on previously purchased compute capacity. Here, the cold start can impact this type of solution, since the container is normally alive only for the time it takes to execute its task.

As for Scalability, if you suddenly need to scale out, the startup time defines how long it takes until your containers are completely ready (up and running) to handle the incoming load.

The more sudden the scenario (the need to scale out and scale in fast), the worse long cold starts can be.

Let’s see how they performed regarding startup time:

You may have noticed that the Startup Time graph includes one more tested option. It is exactly the same Quarkus application, but packaged in a JVM Docker Image (using the Dockerfile.jvm). As we can see, even the Quarkus application running on the JVM has a faster Startup Time than Spring Boot.

Needless to say, the obvious winner is the Quarkus Native application, by far the fastest of them all to start up.

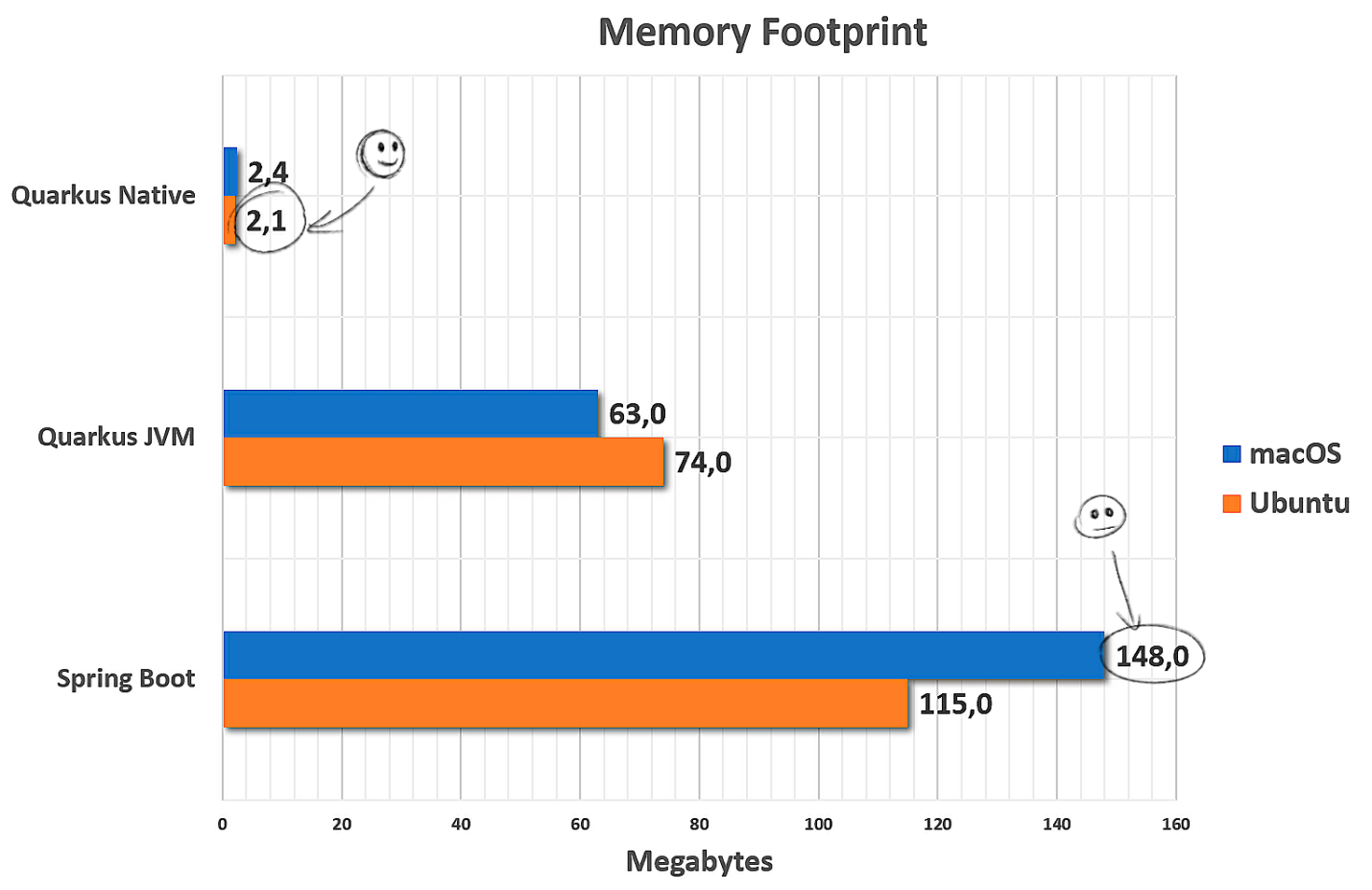

Memory Footprint

Now, let’s check how things went with memory: how much memory each containerized application consumes at startup to get itself up and running, ready to receive requests.
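One way to read a container's memory usage (an assumption on our part; the article does not say which tool produced its figures) is docker stats --no-stream --format "{{.Name}} {{.MemUsage}}", whose MemUsage column has the form used / limit, for example 24.5MiB / 1.944GiB (illustrative values only). The hypothetical Java helper below converts the used part of such a value to MiB, so that samples from several runs can be averaged.

```java
// Hypothetical helper: converts the "used" half of a docker stats MemUsage value
// (format "<used> / <limit>", e.g. "24.5MiB / 1.944GiB") into MiB.
final class MemUsageParser {

    // The "iB" suffixes are checked before plain "B", because they also end with "B".
    private static final String[] UNITS = {"GiB", "MiB", "KiB", "B"};
    private static final double[] TO_MIB = {1024.0, 1.0, 1.0 / 1024.0, 1.0 / (1024.0 * 1024.0)};

    static double usedMiB(String memUsage) {
        String used = memUsage.split("/")[0].trim();
        for (int i = 0; i < UNITS.length; i++) {
            if (used.endsWith(UNITS[i])) {
                String number = used.substring(0, used.length() - UNITS[i].length());
                return Double.parseDouble(number) * TO_MIB[i];
            }
        }
        throw new IllegalArgumentException("Unrecognized unit in: " + memUsage);
    }

    public static void main(String[] args) {
        System.out.println(usedMiB("24.5MiB / 1.944GiB")); // prints 24.5
        System.out.println(usedMiB("512KiB / 2GiB"));      // prints 0.5
    }
}
```

Normalizing everything to a single unit first avoids comparing, say, a KiB reading from one run with a MiB reading from another.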

Conclusion

To sum everything up in a single view, this is what we got from the results on Linux Ubuntu:

It seems that Quarkus won both rounds of this fight (Startup Time and Memory Footprint), beating its opponent Spring Boot with a clear advantage.

This makes us wonder… perhaps it’s time to think about some real labs, experiments, and trials with Quarkus. We should see how it performs in real life, how it fits our business scenario, and what it would be most useful for.

But let’s not forget the cons: as we’ve seen above, some JVM features may not work (yet, or easily) in native executable binaries. Still, it’s probably time to give Quarkus a chance to prove itself, especially if the Cold Start problem has been bothering you. How about putting one or two Quarkus-powered Pods (K8s) to work in your environment? It would be interesting to see how they perform after a while, wouldn’t it?

Source code: https://github.com/ualter/quarkus/tree/master/combat-quarkus-vs-spring